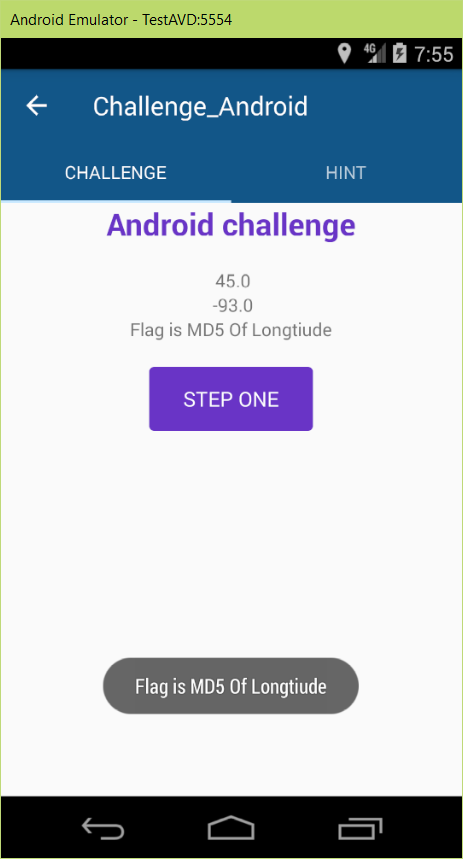

8th SharifCTF Android WriteUps: Vol I

Barnamak WriteUp

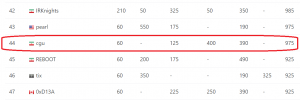

Two weeks ago, SharifCTF was held and the questions were acceptable. We took part under the team name CGU and placed 44th among the 682 participating teams that solved at least one question in the CTF. In this post I describe the writeup for the 200-point question on reverse engineering an Android app (the 3rd question of the reverse section).

Catch the CORS Write Up!

Offsec is an Iranian computer security group that holds conferences and CTFs in the computer security area. For their recent challenge, they created a web challenge that is accessible through the offsecmag Telegram channel. The challenge started on 16 Dec 2016 and here I will WRITE UP! 🙂

This post presents the write-up of the challenge.

Your Radio: Broadcast Yourself!

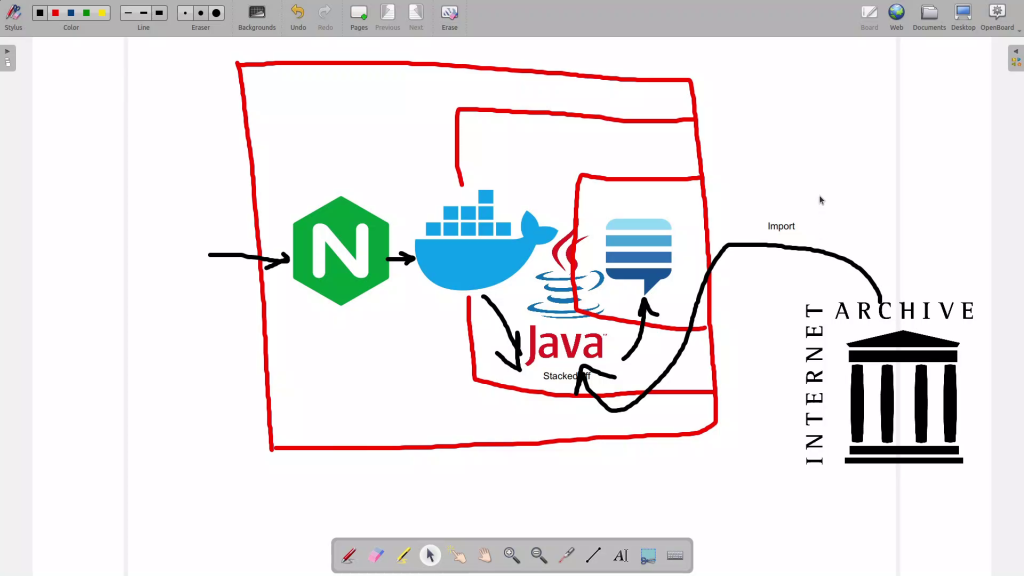

As I mentioned in the About Mir Saman section, I love classical music. I used to listen to online classical radio stations that broadcast music 24×7, and I wondered how these services work. In this post I will show you how to create an online radio broadcast station from your computer.

Onion Harvester: First step to TOR Search Engines

Knowing all possible web paths in the world is the initial step for making a search engine (SE). With an SE, one can search the web for the material one is interested in. In the normal Domain Name System, each TLD (Top Level Domain) provider can sell or release a list of all its domains. For example, the .com TLD provider can sell or release all the domains that end with “.com”. But the problem is more complicated in TOR (or other hidden service providers). In this post I will talk about my tool named Onion Harvester and how to find starting points for discovering hidden services to be crawled.

“Introduction to Cryptography and PGP” Workshop

I held a workshop titled “Introduction to Cryptography and PGP” at Urmia University of Technology. I talked about the basic concepts of signatures and encryption using asymmetric cryptography. Then I talked about PGP, which is one of the main applications of this system. I followed up by configuring Thunderbird and GPG4win to apply PGP to email. You may find the contents in my previous posts about GPG in Thunderbird and the way PGP works.

The pictures of this session are here.

PGP: How it works?

In “Encrypting Emails using PGP/GPG”, I described how to configure GPG (PGP) + Enigmail + Thunderbird for sending signed and encrypted emails, but the inner workings of PGP were not covered. In this post, I will describe how PGP works and emulate the PGP process with the nice cryptography tool named cryptoolv2.

Control your home IoT: Remotely and Graphically

In “Control your home IoT”, I described the configuration steps for controlling a home IoT system remotely. In this post I will describe how to control your system graphically!

Control your home IoT

With IoT, lots of things, such as smart homes, can be connected to and controlled over the Internet. In this small tutorial I will discuss personal smart home solutions and how to control them remotely. In short: “Control your Home IoT System over TOR” 🙂